Neural Networks

Classical machine learning algorithms have done well at predicting and classifying complex datasets, but they sometimes fail to match the accuracy of an ordinary human being. To address this we use neural networks, which are computer models loosely inspired by the human brain.

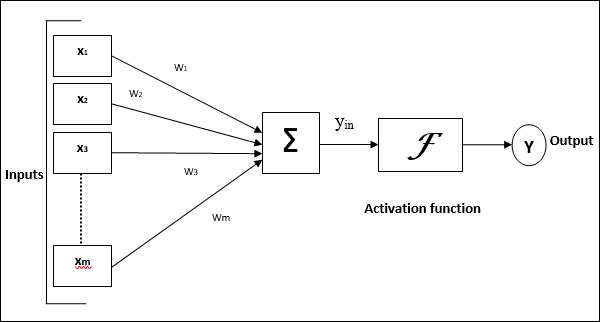

Perceptron: the basic unit of a neural network. It is a linear separator with n inputs x1, ..., xn and a weight for each input, w1, ..., wn, plus a bias input x0 with an associated weight w0.

A perceptron has two parts:

- Weighted sum of inputs

- A threshold function
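The two parts above can be sketched in a few lines of plain Python. This is only an illustration: the function name `perceptron_output`, the convention that the bias input x0 is fixed at 1, the threshold at zero, and the example weights are all assumptions, not something the text specifies.

```python
def perceptron_output(weights, inputs):
    """Return 1 if the weighted sum of the inputs is positive, else 0.

    weights[0] is the bias weight w0. The bias input x0 is assumed to be 1.
    """
    x = [1.0] + list(inputs)  # prepend the bias input x0 = 1 (assumption)
    weighted_sum = sum(w * xi for w, xi in zip(weights, x))
    return 1 if weighted_sum > 0 else 0  # threshold function


# Hypothetical weights chosen by hand so the perceptron computes logical AND.
and_weights = [-1.5, 1.0, 1.0]
and_outputs = [perceptron_output(and_weights, p)
               for p in [(0, 0), (0, 1), (1, 0), (1, 1)]]
print(and_outputs)  # [0, 0, 0, 1]
```

Only the input (1, 1) gives a weighted sum above zero (0.5), so it is the only one classified as 1.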

A neuron has a real-valued output, which is a weighted sum of its inputs. If the training data is linearly separable, the perceptron learning algorithm converges, in a finite number of iterations, to a hypothesis that classifies all training data correctly. An activation function is applied at each layer before the result is passed forward to the next layer.
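To see the convergence claim in action, here is a minimal sketch of the classic perceptron learning rule on the logical OR function, which is linearly separable. The names `train_perceptron` and `predict`, the learning rate, and the epoch cap are assumptions made for this example.

```python
def predict(weights, inputs):
    """Threshold the weighted sum; the bias input x0 = 1 is prepended."""
    x = [1.0] + list(inputs)
    return 1 if sum(w * xi for w, xi in zip(weights, x)) > 0 else 0


def train_perceptron(samples, n_inputs, learning_rate=1.0, max_epochs=100):
    """Perceptron learning rule: nudge the weights after every mistake.

    Returns the weights and the number of passes over the data that were used.
    """
    weights = [0.0] * (n_inputs + 1)  # index 0 holds the bias weight
    for epoch in range(1, max_epochs + 1):
        mistakes = 0
        for inputs, target in samples:
            error = target - predict(weights, inputs)
            if error != 0:
                mistakes += 1
                x = [1.0] + list(inputs)
                weights = [w + learning_rate * error * xi
                           for w, xi in zip(weights, x)]
        if mistakes == 0:  # every training example is classified correctly
            return weights, epoch
    return weights, max_epochs


or_samples = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 1)]
or_weights, epochs_used = train_perceptron(or_samples, n_inputs=2)
print(or_weights, epochs_used)
```

On this tiny dataset the loop stops after a handful of passes. On data that is not linearly separable it would simply run until `max_epochs`.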

Perceptrons are very limited: a single perceptron cannot represent functions that are not linearly separable, such as XOR. A solution is to use multiple layers. Two layers are enough to represent any Boolean function.
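As a small illustration of what a second layer buys, the sketch below wires three threshold units together to compute XOR, which no single perceptron can represent. The weights are hand-picked for the example (they are not learned), and the function names are hypothetical.

```python
def step(z):
    """Threshold function: 1 if z is positive, else 0."""
    return 1 if z > 0 else 0


def xor_network(x1, x2):
    # Hidden layer: two perceptrons with hand-picked weights.
    h_or = step(x1 + x2 - 0.5)   # fires when at least one input is 1
    h_and = step(x1 + x2 - 1.5)  # fires only when both inputs are 1
    # Output layer: "OR but not AND" is exactly XOR.
    return step(h_or - h_and - 0.5)


xor_outputs = [xor_network(a, b) for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]]
print(xor_outputs)  # [0, 1, 1, 0]
```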

Back-propagation is a technique for training a neural network. It works as follows:

- We initialize all the weights and threshold levels of the network to random numbers drawn from a uniform distribution.

- The data is then moved forward through the network, with an activation function applied at each layer.

- The output vector Y is computed at the output layer.

- The output is compared with the expected output, and the error is fed back through the network to adjust the weights. This is repeated as many times as required; each full pass over the training data is known as an epoch.
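The steps above can be sketched for a tiny network with one hidden layer. This is a minimal illustration, not a reference implementation: the sigmoid activation, the squared-error loss, the per-example weight updates, the XOR data, and every name and hyperparameter (`train`, `n_hidden`, `learning_rate`, `epochs`, `seed`) are assumptions chosen for the example.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def forward(x, w_hidden, w_output):
    """Forward pass: return the hidden activations and the network output."""
    xb = [1.0] + list(x)  # bias input
    hidden = [sigmoid(sum(w * v for w, v in zip(row, xb))) for row in w_hidden]
    hb = [1.0] + hidden   # bias input for the output unit
    output = sigmoid(sum(w * v for w, v in zip(w_output, hb)))
    return hidden, output


def mean_squared_error(data, w_hidden, w_output):
    return sum((t - forward(x, w_hidden, w_output)[1]) ** 2
               for x, t in data) / len(data)


def train(data, n_hidden=3, learning_rate=0.5, epochs=2000, seed=0):
    rng = random.Random(seed)
    n_inputs = len(data[0][0])
    # Step 1: initialize all weights (bias weights included) uniformly at random.
    w_hidden = [[rng.uniform(-1, 1) for _ in range(n_inputs + 1)]
                for _ in range(n_hidden)]
    w_output = [rng.uniform(-1, 1) for _ in range(n_hidden + 1)]
    initial_loss = mean_squared_error(data, w_hidden, w_output)
    for _ in range(epochs):  # one epoch = one pass over the training data
        for x, target in data:
            # Steps 2 and 3: move the data forward and compute the output.
            hidden, output = forward(x, w_hidden, w_output)
            # Step 4: compare with the expected output and feed the error back.
            delta_out = (output - target) * output * (1 - output)
            delta_hidden = [delta_out * w_output[j + 1] * hidden[j] * (1 - hidden[j])
                            for j in range(n_hidden)]
            hb = [1.0] + hidden
            xb = [1.0] + list(x)
            w_output = [w - learning_rate * delta_out * v
                        for w, v in zip(w_output, hb)]
            for j in range(n_hidden):
                w_hidden[j] = [w - learning_rate * delta_hidden[j] * v
                               for w, v in zip(w_hidden[j], xb)]
    final_loss = mean_squared_error(data, w_hidden, w_output)
    return w_hidden, w_output, initial_loss, final_loss


xor_data = [((0, 0), 0), ((0, 1), 1), ((1, 0), 1), ((1, 1), 0)]
w_hidden, w_output, initial_loss, final_loss = train(xor_data)
print(initial_loss, final_loss)
```

The printed losses show how far the error dropped during training. How low it gets depends on the random starting weights and the number of epochs.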

Some common activation functions are ReLU, sigmoid, and tanh.
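For reference, the three activation functions named above can be written with nothing but the standard library. These are the usual textbook definitions; the function names are just the ones chosen for this sketch.

```python
import math


def relu(z):
    """Rectified linear unit: passes positive values, clips negatives to 0."""
    return max(0.0, z)


def sigmoid(z):
    """Squashes any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def tanh(z):
    """Squashes any real number into the range (-1, 1)."""
    return math.tanh(z)


for z in (-2.0, 0.0, 2.0):
    print(z, relu(z), sigmoid(z), tanh(z))
```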

An artificial neural network (ANN) can sometimes get stuck in a local minimum during training, and it can also overfit the training data.

When we start to increase the number of hidden layers in the ANN model, it is known as Deep Learning.

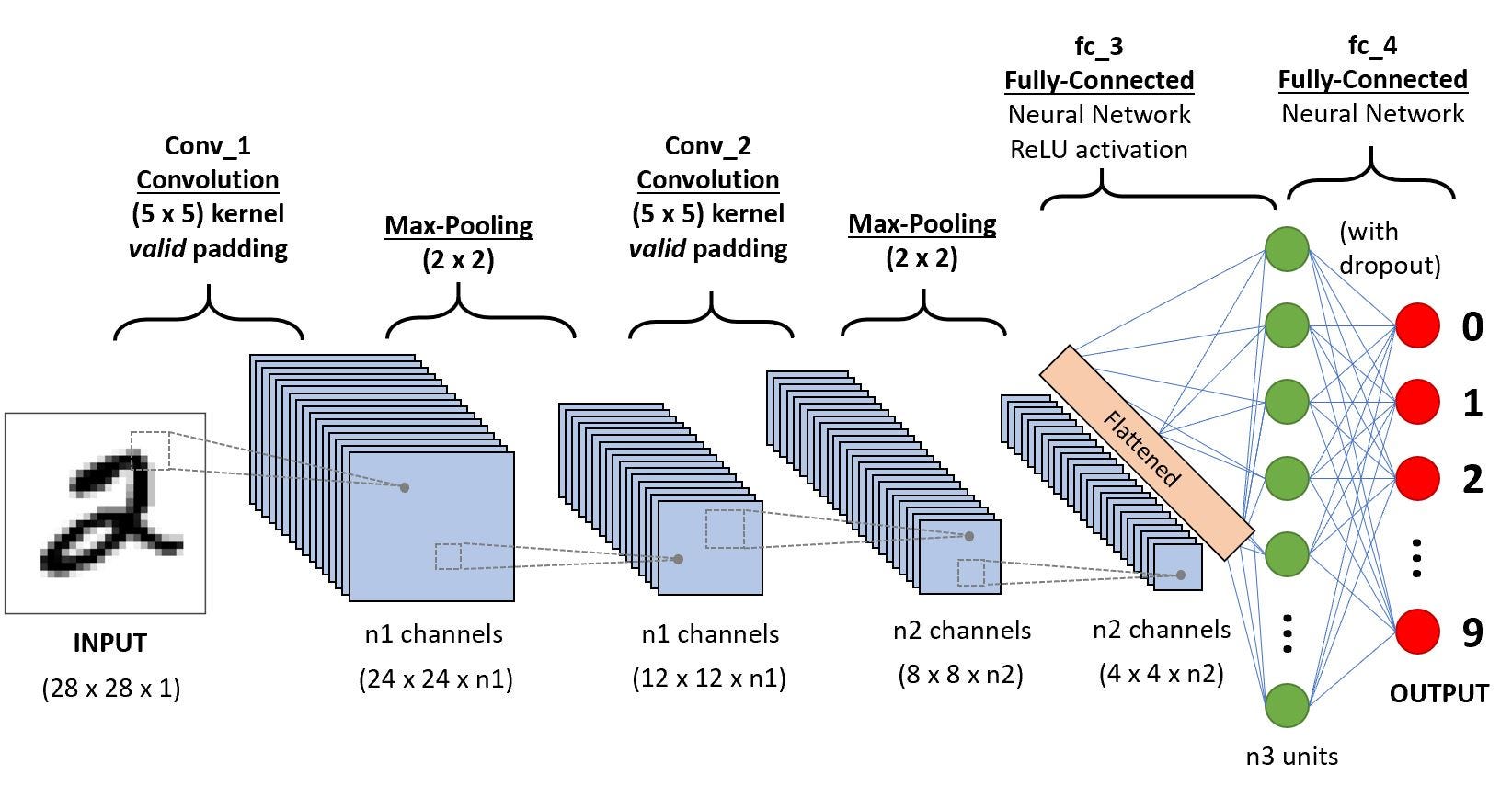

Convolutional Neural Network

A convolutional neural network (CNN) is a neural network designed for images. It consists of a number of convolutional and subsampling layers. When we input an image into a convolutional layer, the layer applies a set of filters to it. Each filter is a different transformation that changes the image slightly, for example by highlighting edges.
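To make the idea of a filter concrete, here is a minimal sketch that slides a small kernel over a grayscale image stored as a list of rows. The function name `convolve2d`, the "valid" (no padding) output size, and the edge-detecting kernel are assumptions for illustration. In a real CNN the kernel values are learned during training.

```python
def convolve2d(image, kernel):
    """Slide the kernel over the image and sum the element-wise products.

    No padding is used, so the output is smaller than the input.
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [[sum(image[i + a][j + b] * kernel[a][b]
                 for a in range(kh) for b in range(kw))
             for j in range(out_w)]
            for i in range(out_h)]


# A 4x4 image that is dark on the left and bright on the right.
image = [
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
    [0, 0, 1, 1],
]
# Hypothetical filter that responds to a dark-to-bright vertical edge.
vertical_edge = [
    [-1, 1],
    [-1, 1],
]
feature_map = convolve2d(image, vertical_edge)
print(feature_map)  # [[0, 2, 0], [0, 2, 0], [0, 2, 0]]
```

The filter outputs zero over flat regions and a positive value only where the image changes from dark to bright.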

After that, pooling is done. Pooling takes small rectangular blocks of the features produced by the convolutional layer and subsamples each block to produce a single output for that block. The result is then flattened from a 3-dimensional array into a 1D array to be fed forward into the rest of the network.
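Pooling and flattening can be sketched as follows. The text does not say which kind of subsampling is used, so max pooling over non-overlapping 2x2 blocks is an assumption here, as are the names `max_pool2d` and `flatten` and the example values.

```python
def max_pool2d(feature_map, size=2):
    """Keep the largest value in each non-overlapping size x size block."""
    h, w = len(feature_map), len(feature_map[0])
    return [[max(feature_map[i + a][j + b]
                 for a in range(size) for b in range(size))
             for j in range(0, w - size + 1, size)]
            for i in range(0, h - size + 1, size)]


def flatten(feature_maps):
    """Turn a list of 2D feature maps (a 3D array) into one flat 1D list."""
    return [value for fmap in feature_maps for row in fmap for value in row]


feature_map = [
    [1, 3, 2, 0],
    [4, 2, 1, 1],
    [0, 0, 5, 6],
    [1, 2, 7, 8],
]
pooled = max_pool2d(feature_map)
flat = flatten([pooled])
print(pooled)  # [[4, 2], [2, 8]]
print(flat)    # [4, 2, 2, 8]
```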

This is followed by a densely connected layer that classifies the image on the basis of the features it receives from the previous layer.

The last layer is the output layer, which gives the final classification of the image.
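A rough sketch of these last two stages is shown below. It assumes the output layer is a dense layer followed by softmax, which is a common choice but is not stated in the text. The weights, biases, and feature values are made up for the example.

```python
import math


def dense(inputs, weights, biases):
    """Fully connected layer: one weighted sum (plus bias) per output unit."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]


def softmax(scores):
    """Convert raw scores into probabilities that sum to 1."""
    largest = max(scores)  # subtracted for numerical stability
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical flattened features and a two-class output layer.
features = [4.0, 2.0, 2.0, 8.0]
weights = [
    [0.1, 0.0, 0.0, 0.1],  # class 0
    [0.0, 0.1, 0.1, 0.0],  # class 1
]
biases = [0.0, 0.0]

probabilities = softmax(dense(features, weights, biases))
predicted_class = probabilities.index(max(probabilities))
print(probabilities, predicted_class)
```

The predicted class is simply the index of the largest probability.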

AutoEncoder

An autoencoder is a neural network trained with an unsupervised algorithm: it applies backpropagation with the target values set equal to the inputs. In other words, it is trained to output its own input.

The hidden layer of the autoencoder maps the input data into a lower-dimensional space, which compresses (encodes) it. Another layer then decodes, or uncompresses, the data to recover its original form.

An ideal autoencoder learns to reproduce the original data from the compressed data with minimal loss, without overfitting to the original data. Autoencoders are generally used in anomaly detection, data denoising, image inpainting, etc.
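The encode-then-decode idea can be sketched with a deliberately tiny autoencoder that compresses 2D points into a single number and reconstructs them. To keep the example short it is linear (no activation function, no biases) and trained with plain per-example gradient descent. All names, the toy data, and the hyperparameters are assumptions for illustration.

```python
import random


def encode(x, enc):
    """Hidden layer: compress the input vector into a single number."""
    return sum(w * v for w, v in zip(enc, x))


def decode(code, dec):
    """Output layer: expand the code back to the input dimension."""
    return [w * code for w in dec]


def reconstruction_loss(data, enc, dec):
    total = 0.0
    for x in data:
        x_hat = decode(encode(x, enc), dec)
        total += sum((a - b) ** 2 for a, b in zip(x_hat, x))
    return total / len(data)


def train_autoencoder(data, learning_rate=0.1, epochs=2000, seed=0):
    rng = random.Random(seed)
    n = len(data[0])
    enc = [rng.uniform(-0.5, 0.5) for _ in range(n)]
    dec = [rng.uniform(-0.5, 0.5) for _ in range(n)]
    initial_loss = reconstruction_loss(data, enc, dec)
    for _ in range(epochs):
        for x in data:
            code = encode(x, enc)
            x_hat = decode(code, dec)
            # The target is the input itself, so the error is x_hat - x.
            err = [a - b for a, b in zip(x_hat, x)]
            # Back-propagate the squared error through decoder, then encoder.
            grad_code = sum(2 * e * w for e, w in zip(err, dec))
            dec = [w - learning_rate * 2 * e * code for w, e in zip(dec, err)]
            enc = [w - learning_rate * grad_code * v for w, v in zip(enc, x)]
    final_loss = reconstruction_loss(data, enc, dec)
    return enc, dec, initial_loss, final_loss


# Toy data: 2D points that all lie on one line, so one number can describe them.
points = [(0.1, 0.2), (0.2, 0.4), (0.3, 0.6), (0.4, 0.8), (0.5, 1.0)]
enc, dec, initial_loss, final_loss = train_autoencoder(points)
print(initial_loss, final_loss)
print(decode(encode(points[2], enc), dec))
```

For a use such as anomaly detection, one option is to flag inputs whose reconstruction error is much larger than the error seen on the training data.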
